I continually get asked the same questions about SEO, so I decided to round up six SEO experts to get their take on these commonly asked questions. This may be a little long, but trust me, there is SEO gold up in these hills! Grab a cup o’ joe and take 4 minutes to read through.

But first, let’s meet our experts…

AJ Ghergich, Founder of Ghergich & Co. – @seo: “SEO & Content Marketing Expert. I Share Industry News That Is Actually Worth Reading!”

Phil Nottingham, Video Strategist – @philnottingham: “Video Strategist. Theatrical misadventurer, Objectivist, Feminist and general enthusiast.”

Eric Enge, CEO Stone Temple Consulting – @stonetemple: “Speaker. Lead Author of the Art of SEO. Columnist and contributor to Search Engine Land, Moz, and Copyblogger.”

Bryson Meunier, Director of SEO at Vividseats.com – @brysonmeunier: “Experienced SEO and mobile search optimization expert. Search Engine Land columnist & speaker.”

Jason White, VP of SEO at DragonSearch – @sonray: “Main food groups: SEO, cycling, coffee, laughs and highfives. Hustle, booty shaking and beer drinking. Tweets about SEO to the point my wife unfollowed me.”

Steve Slater, SEO at Vivid Image – @TheSteve_Slater: “Don’t want to see tweets about SEO, the Seahawks, music, or MY daily life don’t hit the follow button… Don’t sweat new things, make old things better.”

Q 1: Should we worry about duplicate content?

AJ Ghergich:

This all comes down to scale. A little bit of duplicate content is 100 percent natural and will not hurt you at all. However, if you have duplicate content on a huge scale, it can and will hurt your ranking potential.

I have seen sites benefit from cleaning up their site architecture and clearly labeling which pages should take priority.

Phil Nottingham:

I think most people have no concept of what “duplicate content” really means, and how Google treats it. I’ve seen a lot of small businesses, albeit often small businesses with big websites, who have been harmed by Google Panda updates as a consequence of having a large amount of duplicate or thin pages.

Equally, there are a lot of companies panicking and worrying about having a few lines of duplicate text across an otherwise decent and high-quality site. You have to understand what Panda is and how it works. You also need to understand what it is that Google is trying to reward and/or devalue, where duplication is concerned.

Eric Enge:

Let’s start by correcting a misconception:

Google has never “penalized” duplicate content. That’s not how it works.

Here’s what happens. Google will ignore all but one of the pages that it sees as a duplicate, and it may not pick the one that you want.

Also, two other things are true:

- Google is now spending time crawling duplicate content pages (pages it will never show in search results) instead of pages that it might rank. This can cost you in terms of having pages not get indexed, or getting indexed slowly, and Google not noticing changes you’ve made to pages for a long time. Those are not good things!

- You will have links pointing to these duplicate pages. The PageRank on those pages is largely wasted. This is not good for you either.

So yes, you should deal with duplicate content (if it’s actually duplicated content).

Bryson Meunier:

Google says not to worry about it, as they can usually detect canonical versions. However, for competitive sites, I think it’s necessary to control as much as possible.

It’s true that Google will probably be able to detect it and handle it appropriately, but it’s not difficult to give them strong signals of canonical versions, using parameter handling in Search Console, the hreflang attribute, and the canonical tag. As SEOs, I think it’s our responsibility to mitigate that risk as much as possible by providing these signals.
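To make those signals concrete, here is a minimal Python sketch that reads the canonical and hreflang links a page declares, so you can spot-check that each page points where you expect. The sample markup, the example.com URLs, and the names `CanonicalSignals` and `read_signals` are all hypothetical; this is an illustration using only the standard library, not a tool any of the experts mention.

```python
from html.parser import HTMLParser


class CanonicalSignals(HTMLParser):
    """Collects rel=canonical and rel=alternate hreflang links from a page."""

    def __init__(self):
        super().__init__()
        self.canonical = None   # href of the canonical link, if any
        self.hreflang = {}      # language code -> alternate URL

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr = dict(attrs)
        rel = (attr.get("rel") or "").lower().split()
        href = attr.get("href")
        if "canonical" in rel and href:
            self.canonical = href
        elif "alternate" in rel and attr.get("hreflang") and href:
            self.hreflang[attr["hreflang"]] = href


def read_signals(html):
    """Return (canonical_url, {hreflang: url}) declared in the given HTML."""
    parser = CanonicalSignals()
    parser.feed(html)
    return parser.canonical, parser.hreflang


# Hypothetical page markup, for illustration only.
SAMPLE = """
<html><head>
<link rel="canonical" href="https://example.com/laptops">
<link rel="alternate" hreflang="en-gb" href="https://example.com/uk/laptops">
<link rel="alternate" hreflang="de" href="https://example.com/de/laptops">
</head><body></body></html>
"""

canonical, alternates = read_signals(SAMPLE)
print(canonical)
print(alternates)
```

Running something like this over the URL variants of one page (tracking parameters, sort orders, regional copies) is a quick way to see whether they all declare the same canonical version.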

For most SMBs not in competitive industries, however, there are better things to prioritize than duplicate content.

Jason White:

Large sites do just fine with duplicate content. The smaller sites weren’t marketing, and they were blatantly gaming the system.

I’m a big believer that SEO is cumulative and sites actually do better with a little dirt…the trick is to control where the dirt is. I think the type of duplicate content is important – Google can understand the difference between a site being scraped and when the site is regurgitating content to the far reaches of the internet.

Canonical, clean sitemaps and an RSS feed and you should be fine.

Steve Slater:

I have seen a few sites, mostly eCommerce, struggle with having content that is very similar to competitors’ content, so when it comes to duplicate content, I tend to not poke the bear (Google). I avoid creating duplicate content on any site I work with. When I do create duplicate content, I’m always sure to use canonical tags, or noindex when necessary.

I’ve seen sites gain organic traffic after canonical and noindex tags have been applied. This is one of those areas that is pretty cut and dried to me.

There are fewer situations today where you need to create duplicate content. The days of chasing exact match keywords are over. To me, it’s more important to have your structured data and open graph tags in place.

The only situation I run into where duplicate content comes up regularly is when it relates to landing pages. Generally with a landing page, the goal is to convert a paid channel and not to rank organically, so it is never a concern to noindex or canonical a landing page.

Q 2: If you’ve lost the majority of your organic rankings due to a new site launch, what’s your best advice on regaining that traffic?

AJ Ghergich:

Just last week I talked to a new client that did a major website redesign. They lost almost all visibility in Google shortly thereafter.

The issue was quite simple. Their design agency uploaded a robots.txt file that blocked search engines from the entire site. I have seen this happen multiple times. The design agency blocks search bots from the development environment and forgets to change it when they push the site live… doh! To avoid this, make sure they’re using a pre- and post-launch website checklist.
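One item on such a checklist can be automated. The sketch below uses Python’s standard `urllib.robotparser` to test whether a robots.txt body would block a crawler from the site root. The function name `blocks_whole_site`, the two sample files, and the example.com URL are assumptions for illustration; in practice you would fetch the live file and run the same check right after launch.

```python
from urllib.robotparser import RobotFileParser


def blocks_whole_site(robots_txt, site_root, user_agent="Googlebot"):
    """Return True if this robots.txt body forbids crawling the site root."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, site_root)


# Hypothetical robots.txt contents, for illustration only.
DEV_ROBOTS = "User-agent: *\nDisallow: /\n"         # typical staging setup
LIVE_ROBOTS = "User-agent: *\nDisallow: /admin/\n"  # what should ship

print(blocks_whole_site(DEV_ROBOTS, "https://example.com/"))
print(blocks_whole_site(LIVE_ROBOTS, "https://example.com/"))
```

The first call reports that the staging file blocks the whole site, and the second reports that the live file does not. That is exactly the difference that gets missed when a development robots.txt is pushed to production.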

Another common issue I see is that people simply 301 their site and think they are done. Inside of Search Console, Google literally has a change of address function. You need to 301 everything properly AND tell Google and Bing your site moved through their official tools (at a bare minimum).

Phil Nottingham:

In many ways… it’s a bit too late. A botched migration can be a real pain to fix, and so I’d advise any company to seek out professional help in advance of a migration rather than afterward.

Eric Enge:

There are many possible causes for such lost traffic. One of the most common is that the redirects from the original pages to the new pages were not properly implemented. When this happens, the links that point to the old version of the site are no longer helping you rank, and you lose rankings.

The first part of the solution is to get the redirects (301 redirects only please!) put in place – the sooner the better. However, one solution that people often overlook, and that is definitely worth doing, is to approach the sites that link to your old pages, and ask them to update their links to point to the new pages. This takes the redirect out of the process, and it’s usually very helpful.

One more thing. Sometimes the nature of the problem is that the content has changed too much during the redesign. So if the pages that were ranking previously are no longer there, and the content is fundamentally changed, there is little that you will be able to do to recover, other than to recreate the content.

Bryson Meunier:

When it comes to regaining lost site traffic, if it was for competitive keywords for which there is more than one highly relevant site that Google can return, the traffic may never get back to the levels it was originally.

SEO is like a race, in a sense. If you trip and fall you can get up and head toward the finish line, but competitors who haven’t fallen may be too far ahead for you to catch. This may be a reality that a website has to deal with, and it’s better to understand the truth and find alternative strategies than continue on the same path when you have no hope of winning.

Don’t give up before you do everything you can to fix the issue though. One-to-one redirects are essential, along with hreflang tags for international sites. Refresh the XML sitemap if you haven’t done that already, use Bing and Google’s site move tools, and keep building links to the new site.

If you give it enough time and give them enough signals, the search engines should catch on, and return most of the traffic you’re highly relevant for.

Jason White:

There are a few variables, but I would focus on indexing and maintaining a clean crawl. I’d attempt to update links that were pointing to the old pages so they went to the new pages without a redirect and I’d likely hit the ground running with a content/social strategy to find new opportunities while monitoring and reacting. It can be a long road regaining lost trust.

Steve Slater:

I’m an SEO guy in a unique position of working at an agency with a team of account directors, web designers and developers who understand the importance of URL changes with new site builds. This often provides an advantage of avoiding URL changes when possible and preplanning for URL changes if it can’t be avoided.

With this in mind, the very first thing I do when a new site launches and organic rankings drop is check the Moz algo change record. I was burned once. I spent a lot of time trying to figure out what was wrong with the redesign, and when I eventually checked the algo change record it was very clearly a Panda issue.

So step number one for me when organic rankings drop is always to check and see if it is possibly anything outside of my control. Once you rule out an algo change, it’s important to review Google Search Console and Bing Webmaster Tools and ask yourself these questions.

- Has a sitemap been submitted?

- Has your site been indexed?

- Are there crawl errors being reported in Search Console?

If a sitemap has not been submitted, create and submit a sitemap. If the site has not been indexed, look for errors in your sitemap.
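If you need to create a sitemap by hand, the format is simple enough to generate yourself. Here is a minimal sketch, assuming you already have a list of the new site’s URLs; the function name `build_sitemap` and the example.com URLs are hypothetical, and a real sitemap may also carry optional fields such as `lastmod`.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a minimal XML sitemap (as a string) listing the given URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url  # special characters are escaped
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical URLs, for illustration only.
sitemap_xml = build_sitemap([
    "https://example.com/",
    "https://example.com/laptops",
])
print(sitemap_xml)
```

Save the output as sitemap.xml at the site root, then submit it through Google Search Console and Bing Webmaster Tools.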

If you have crawl errors, this might be part of your problem. Crawl errors are created when search engines navigate to URLs that no longer exist.

Generally, when a new site is launched, the URL structure is the last thing considered. Many site owners sabotage their rankings by changing page URLs without realizing that the URL is holding all of that page’s value. By changing the URL, you are basically starting that page over again.

If you need to change the URL structure, your next best option is to redirect your old page to your new page. This is always option two because some of that page value will decay as it passes through a redirect.

When the URL structure has changed and nothing has been done, you will find lots of crawl errors in your Search Console. Be sure to redirect these crawl errors to the most appropriate new page. Resist the temptation to redirect all of them to your new homepage.
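A quick way to sanity-check a redirect plan before launch is to audit the old-to-new URL map itself. The sketch below is illustrative only: the paths are made up, `audit_redirects` is a hypothetical helper, and the two checks it runs (redirect chains, and old URLs dumped on the homepage) are just the problems described above expressed in Python.

```python
def audit_redirects(redirects, homepage="/"):
    """Flag common problems in an old-URL -> new-URL redirect map."""
    # A chain exists when a redirect target is itself redirected again.
    chains = [old for old, new in redirects.items() if new in redirects]
    # Old pages sent to the homepage instead of a relevant new page.
    to_home = [old for old, new in redirects.items() if new == homepage]
    share = len(to_home) / len(redirects) if redirects else 0.0
    return {"chains": chains, "to_homepage": to_home, "homepage_share": share}


# Hypothetical redirect map, for illustration only.
REDIRECTS = {
    "/products/laptops.html": "/laptops",
    "/products/notebooks.html": "/laptops",
    "/about-us.html": "/about",
    "/old-blog/": "/",
    "/about": "/company",
}

report = audit_redirects(REDIRECTS)
print(report["chains"])
print(report["to_homepage"])
print(report["homepage_share"])
```

Here `/about-us.html` is flagged because its target `/about` is redirected again, and `/old-blog/` is flagged for pointing at the homepage. If the homepage share creeps up, that is usually a sign the mapping was rushed.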

Q 3: If you have a list of 100+ phrases and they’re all relatively similar, what considerations do you take in choosing a phrase for a page?

AJ Ghergich:

Google’s Hummingbird update made it so much easier for Google to understand what your page is about.

You no longer need to try and use 12 versions of a keyword phrase. I highly suggest writers create copy with zero thought toward SEO.

Once the writing is complete, recheck that copy. Usually the writer will have naturally used several of the keywords you want. If not, make some slight tweaks and you are set. A tool like Keywordtool.io can be very helpful. To get an idea of the issues your users are facing, try a search like: How or Why + Your Keywords.

Once your copy is set then you can get a little more SEO specific with the title tag. Just make sure your title tag is highly clickable for humans as well as optimized for search.

In general your title tag should have your most important keywords listed from left to right. However, that does you no good if nobody clicks your listing when it ranks.

Phil Nottingham:

Group the phrases by intent, and create a page for each of the dominant intentions. It’s often bad practice to try and heavily optimise a page for one specific query. You should be thinking much more holistically about user experience and what the specific page is trying to solve.

If you get things right with the copy and general targeting, you should be able to rank for the majority of important searches.

I suppose I reject the very idea of “picking a phrase for a page.”

Eric Enge:

Tough question, as there is a lot of “the devil is in the details” to it. One part is to figure out whether there is enough difference between the variants of the phrases that you can create multiple pages. Only do that if there is enough differentiation between the content on the pages for separate pages to make sense to real users.

But let’s say you have a large number of phrases, and they are all choices just for that one page. The most important input is probably the one that will make the most sense to users on your site.

If you are not sure of that, search volume on that phrase is ONE input you can use to figure out what might make most sense to potential customers. Another thing you can do is test different phrases for the page, and see which one converts the best, or results in the best combination of traffic and sales.

Bryson Meunier:

I’m trying to imagine a scenario where we’ve had 100+ phrases that are all similar enough to put on the same page. Mostly a list of 100+ phrases will follow the long tail model and one phrase will be the head term that everyone searches on, and the other 99 phrases are slight variations of that head term.

If the site has enough authority it can rank on all 100+ terms just by creating relevant content for each term, and linking them together in a way that is meaningful to the search engines.

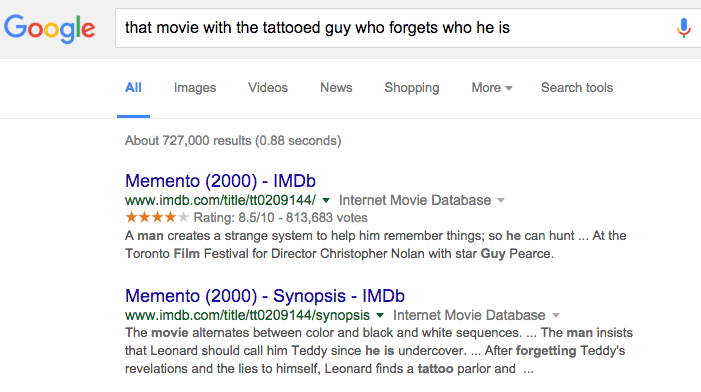

For example, if you are a retailer like Best Buy, and you sell laptops, you need to keep in mind that these are the related searches that Google lists for the term:

Additionally there are some brands that still call their laptops “notebooks”, and some that call them “chromebooks.”

How should Best Buy assign all these keywords to content? Usually by considering the search volume, the user intent, the competitiveness of the space and the connections between concepts, and then picking a few keywords that they have a chance of ranking for.

SMBs will probably pick longer tail terms that are still qualified and attempt to rank for those, while working toward a term that has more volume.

The answer will be different for every business, depending on authority, relevance and resources; but those are the basic considerations I take.

Jason White:

I ask myself…

- What is the goal of the page and how does it relate to the searcher’s intent?

- What type of content is doing well for these phrases and what is the competitive nature of those verticals?

It’s important to consider where the phrase fits within the buying cycle and what type of content or experience should be served at that touch. I think most are using strategies that bundle keyword phrases but the next step is to understand your personas and how the user journey relates to your keyword bundles.

Steve Slater:

The user’s intent is always the biggest consideration.

Like I said earlier, the days of exact match keywords are over. If you have 100 phrases that are all relatively similar they should be based around the same user intent.

My goal at this point is not to work in as many different variations of the keyword as I possibly can. My goal now is to create the greatest piece of content that I possibly can. This content should not only apply to the user’s intent, but should anticipate other questions that a user has before they know that they have them.

There is a great example of this in the SEO world. If you search for, “how long should a meta description be?” or “meta descriptions length” or “meta description best practices” you will probably see this page in the top 10 organic results.

The team at Moz not only answered the user intent for all of those questions, and more, they have also anticipated questions the user didn’t even know that he or she had yet. If all SEO professionals focus on creating content that creates this kind of value, instead of creating content that will rank, the web would be a better place and we would have happier clients.

Q 4: If you have a brand new site, what’s your best advice for building Domain Authority and increasing your rankings?

AJ Ghergich:

I would not launch a new site without a content strategy. You must have a reason for people to link to you. To start with I would put 80 percent of my best content on other people’s sites. Think industry leading magazines, thought leader blogs, etc…

As your blog and site mature, slowly reverse those numbers until only 20 percent of your content goes outside your blog.

Phil Nottingham:

Spend a lot of money on one good creative piece, rather than trying to do lots of blogging or “infographics.” Distilled recently worked with a new brand launching a B2B software site and through a single good creative piece, they were able to get 655 new linking root domains to the new site.

Eric Enge:

The most important point is that it’s not about the quantity of links, it’s about the quality of the links. One great metric for evaluating link quality is to ask yourself whether or not you might actually get referral traffic from them. The other major consideration is to realize that the best “link building” campaigns are those that focus on reputation and visibility first. It’s critical to internalize that.

What would you do to build your brand and reputation if there were no search engines? That’s a pretty good proxy for how to start thinking about building Domain Authority.

The other major element is to realize that you MUST publish content that is world class for your market space. You won’t get it done with garden variety content. You need to be creating content that will withstand the scrutiny (and gain the acclaim) of other subject matter experts in your market space.

Bryson Meunier:

Build relationships with people and link them to the digital world in a way that is authentic and relevant. And don’t worry too much about scale.

More important things to worry about if you have a brand new site:

- The product/service: is your business model better than your competitors?

- The accessibility of your site: can a search engine add every unique page to the index, or are there technical roadblocks preventing the process?

- The relevance of the content to searcher queries.

- The compliance of your site with Google’s webmaster guidelines: are you doing something (intentionally or not) that will get you de-indexed?

Once all of that is taken care of, any efforts that you put forth toward increasing overall link equity will have a better chance at succeeding. Without them none of your domain authority will matter.

Jason White:

Be real and master your channels before moving on. It’s all about building trust and showing that you follow best practices early on. If you don’t and you do many things poorly, it will take a long time to rebuild trust.

Working with luminaries in your vertical can help build links and earn social shares quickly so be sure to be a helpful, giving member of the communities around you.

Steve Slater:

Create a great user experience.

Recently I was at an event and Wil Reynolds of SEER was the keynote speaker. He asked us all, “if you took all of your content down today, would anyone miss it?”

That’s a gut check kind of question. I think this question really makes you consider where the industry is headed. Let’s forget about Google for a second. Think about your audience.

We are now speaking to a very savvy audience. People are connected to the web almost all day. Now imagine for a second that I told someone how to fake every ranking signal and promised them number one rankings for life. Would that matter? Chances are they would look at the rankings and consider themselves done.

When users get to their page would they want to be there? Would they be thankful that they found the page? Would they share the page? Would their questions be answered?

Your best bet for increasing your rankings and domain authority in the SEO world of today is to have faith in your brand and create content that reinforces it. Care about your content, and aim to make it the greatest thing on the web, not just to have it rank.

So many brands take the, “let’s get this done and ranking” approach to creating content when they should be taking the “let’s make this amazing!” approach.

Q 5: Besides creating quality unique content, what’s your best tip to boost search rankings?

AJ Ghergich:

You need really strong site architecture and on-page optimization. When you pair this with awesome content great things happen. You also need to expand what you think of as content or a link building opportunity.

You can easily turn job postings, in-person events, and vendor relationships into links. A simple tool that monitors unlinked brand mentions can become one of your best link building drivers.

Phil Nottingham:

Create exceptional content. “Quality” content typically won’t cut it any more. Your content can’t just be in the top 50% of other offerings out there, it needs to be truly exceptional to get traction.

Eric Enge:

All the Domain Authority in the world won’t mean anything if you don’t succeed in creating a website that is a great experience for users. You’ll get the “Domain Authority”, but not the traffic. Google is doing increasingly more to evaluate whether or not users will actually find what they want when they send them to your site.

The overall usability and value of your site is now a critical part of SEO. Don’t overlook it!

Bryson Meunier:

I know a lot of SEO consultants are all about transparency post-Moz, but in my 15 years doing professional SEO I’ve never given my best tips to people who don’t pay my salary. That said, generally the best thing you can do to boost search engine rankings is value data over opinion.

There are many people in this industry whose opinions I respect, but when it comes to their predictions about SEO most of them have the same predictive accuracy as a coin flip. Spend less time reading SEO blogs, and more time testing best practices to see if they apply to your business.

The trend toward data science in SEO as evidenced by Pinterest and others is a good one, I think; and one that could help a lot of brands discover SEO truths that no one else who isn’t testing their site can really know.

Jason White:

Clean link building chased with solid social signals; I try to group activities so I can send positive signals from multiple channels. Successful content won’t have 100 links and only 3 social shares naturally. Signals need to be congruent and from multiple sources to earn trust and to achieve long term rankings. They also can’t be earned over a short period of time so it’s important to keep at it and space actions out in a rational way.

Steve Slater:

Build a strong brand. If your audience knows who you are when they hit Google, they are more likely to click on you and trust you. Make them search for you by name, and your SEO will never be better.

Thanks to all of the experts who weighed in. If you want more advice, here are more SEO tips.

What do you think? Agree? Disagree? Let us know with a comment below.