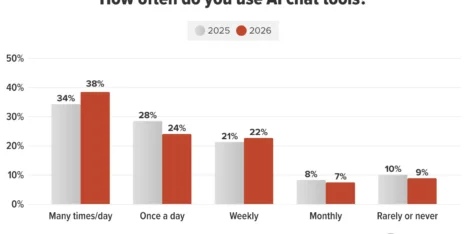

Your future prospect is doing a little research, looking for a new partner.

Who do they find?

Do they find you?

Did AI recommend your brand?

The answer is partly shaped by sources that aren’t your website. Yes, your website is your first and best opportunity to train the AI to recommend your brand, but AI trains on the entire web. So off-site sources matter.

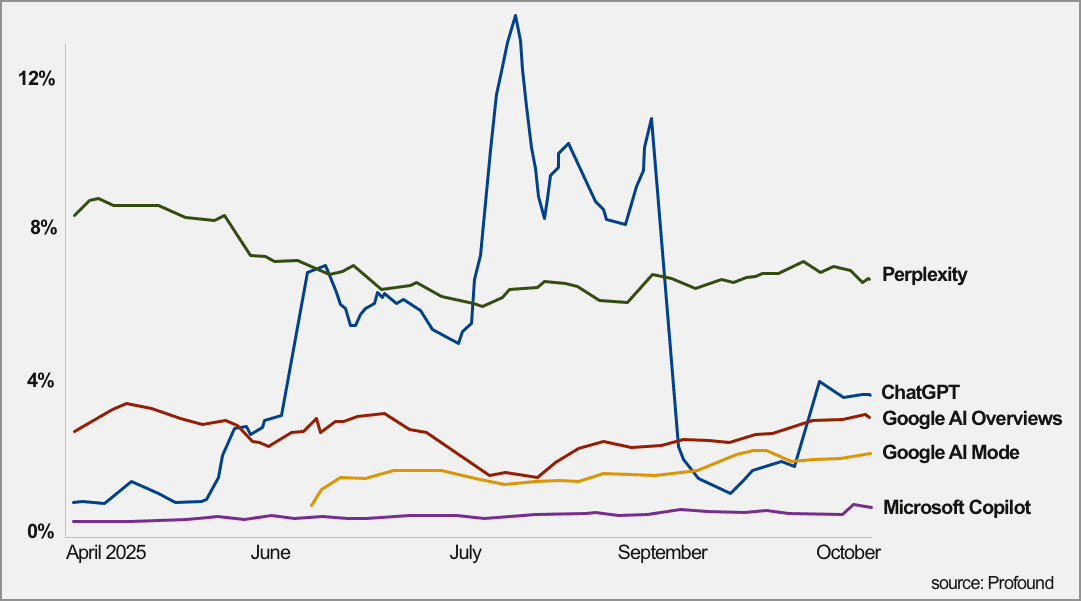

SEOs all know this, and we’ve all seen the research showing that AIs cite some websites more than others. Maybe you’ve heard that Reddit is one of the most commonly cited websites in AI responses. Here’s some data from Profound.

But does that mean every brand needs a Reddit strategy? I’m not so sure.

Just because Reddit (or YouTube or Wikipedia) is a common citation doesn’t mean the AI relies on it to respond to your future prospect when they prompt.

Reddit (or any source) only matters if AI checks it when your buyer searches for brands in your category.

The sources that influence AI responses are category-specific and query-specific.

Saying every brand needs a Reddit strategy is like saying every brand needs a Facebook strategy: Facebook is popular, therefore every brand needs to be on Facebook. Of course, that’s absurd.

Don’t chase every visible citation source. Instead, identify which external sources consistently shape AI answers for your buyer’s actual use cases, then prioritize the channels that you can realistically influence.

So don’t start with the sources. Start with the prompts.

Today, we’ll peek into the AI training data to see which sources appear to shape AI answers. You’ll learn that those sources are discoverable and practical to assess.

To do that, we’ll use a four-step process:

- Predict the prompts that your buyer uses when researching your category (ChatGPT)

- Run those prompts in Google (Gemini or AI Mode)

- Archive the responses and sources for those responses (Gemini or AI Mode)

- Analyze the sources that influence responses and prioritize next steps (ChatGPT)

Why can’t we just do this all in one model?

You can. It can all be done in Gemini, but the analysis isn’t as good. It’s better to use two models, where one analyzes the other’s output.

Doesn’t our prompt tracking tool do this?

Maybe. It has the reports, but not the analysis. We set up prompt tracking for all of our optimization clients. If you have one of these tools, export the top source URLs (excluding your competitors) and skip to Step 4 below.

When you’re done, you’ll have a small, prioritized strategy for off-site AI search optimization. There’s a screenshot below of what it will look like.

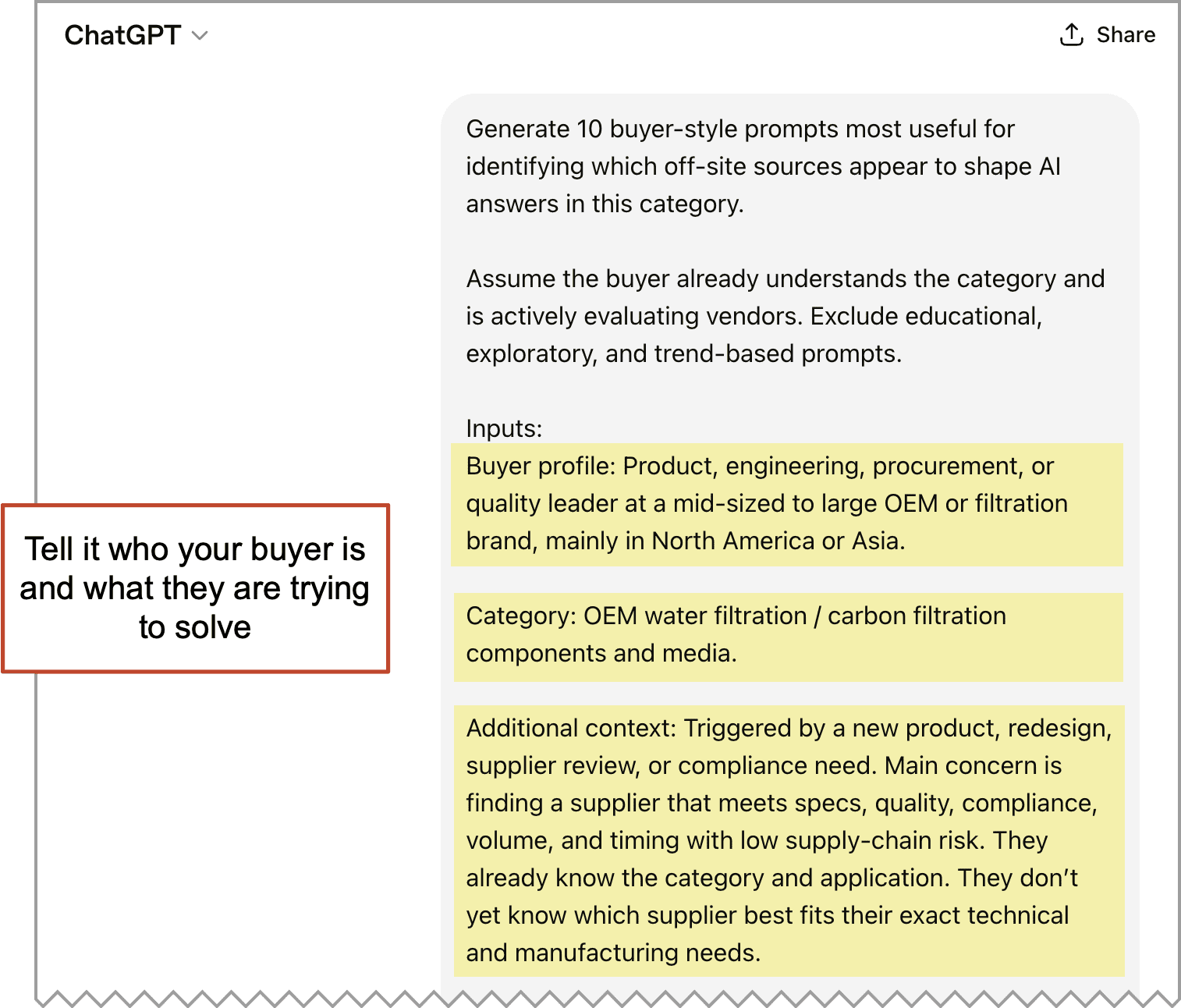

Step 1: Generate Buyer-Specific Commercial-Intent Prompts

This prompt predicts the likely “buyer-intent prompts” we need in order to uncover which off-site sources shape AI answers. It creates realistic prompts that sound like something a real prospect would actually type into an AI tool while evaluating brands like yours.

The inputs are very important. You need to tell it about your buyer. If you give it the right details, the predicted prompts will be more accurate. If you have defined ICPs, personas, keyword data or market research, pull from those.

- Buyer profile: Job titles, company size, geography and anything else relevant

- Buyer industries: The verticals they work in

- Additional context: What problem are they hoping to solve? What sent them looking for help? What do they already know about what they need?

Pro Tip: If you’re struggling to fill this out, try this prompt to get you started: “Visit [website] and infer the most likely ICP. Then list the buyer profile, industry and additional context. Keep the total response under 90 words, use compact phrases (no paragraphs) and skip the explanation and commentary.”

Review the output carefully, edit it, then plug those details (probably at least 100 words) into the top of the prompt below.

Buyer-Specific Prompt Generator Prompt (ChatGPT)

Generate 10 buyer-style prompts most useful for identifying which off-site sources appear to shape AI answers in this category.

Assume the buyer already understands the category and is actively evaluating vendors. Exclude educational, exploratory, and trend-based prompts.

Inputs:

- Buyer profile: [job titles, company size, geography]

- Category: [their industry]

- Additional context: [what triggered the research, primary concern, what they already know, what they don’t know]

Choose prompts that span key buying stages such as discovery, shortlist, comparison, validation, implementation risk, and ROI.

Write each prompt like a real buyer question: short, natural, and commercially specific, usually under 12–15 words. Avoid long setup, extra detail, and multi-part instructions. Include only details a buyer would realistically mention, and prioritize variety over near-duplicate comparisons.

For each prompt, include:

- Copy/Paste This Prompt

- Buyer Intent

- Decision Criteria Reflected

- Likely Source Types Surfaced

Append this instruction to every “Copy/Paste This Prompt” entry:

Use current web information. After answering, include:

- Brands mentioned

- Third-party sources referenced

- A plain list of specific supporting links or publications, one per line

Also include a short explanation of why these prompts were chosen, brief notes on likely source diversity across the set, any missing or overrepresented stages, and any prompts intentionally deprioritized.

Formatting rules: The response should italicize the text in the Copy/Paste This Prompt section and put it in quotes. Copy/Paste This Prompt must appear first for every prompt and its content must be a single clean paragraph, not bullets. The user should copy and paste only the text in that section into another AI tool. Keep the other fields concise and easy to scan, and keep the output clean and easy to review one prompt at a time.

The prompt should look something like this. Those details are used to predict the prompts your buyer uses to research your category.

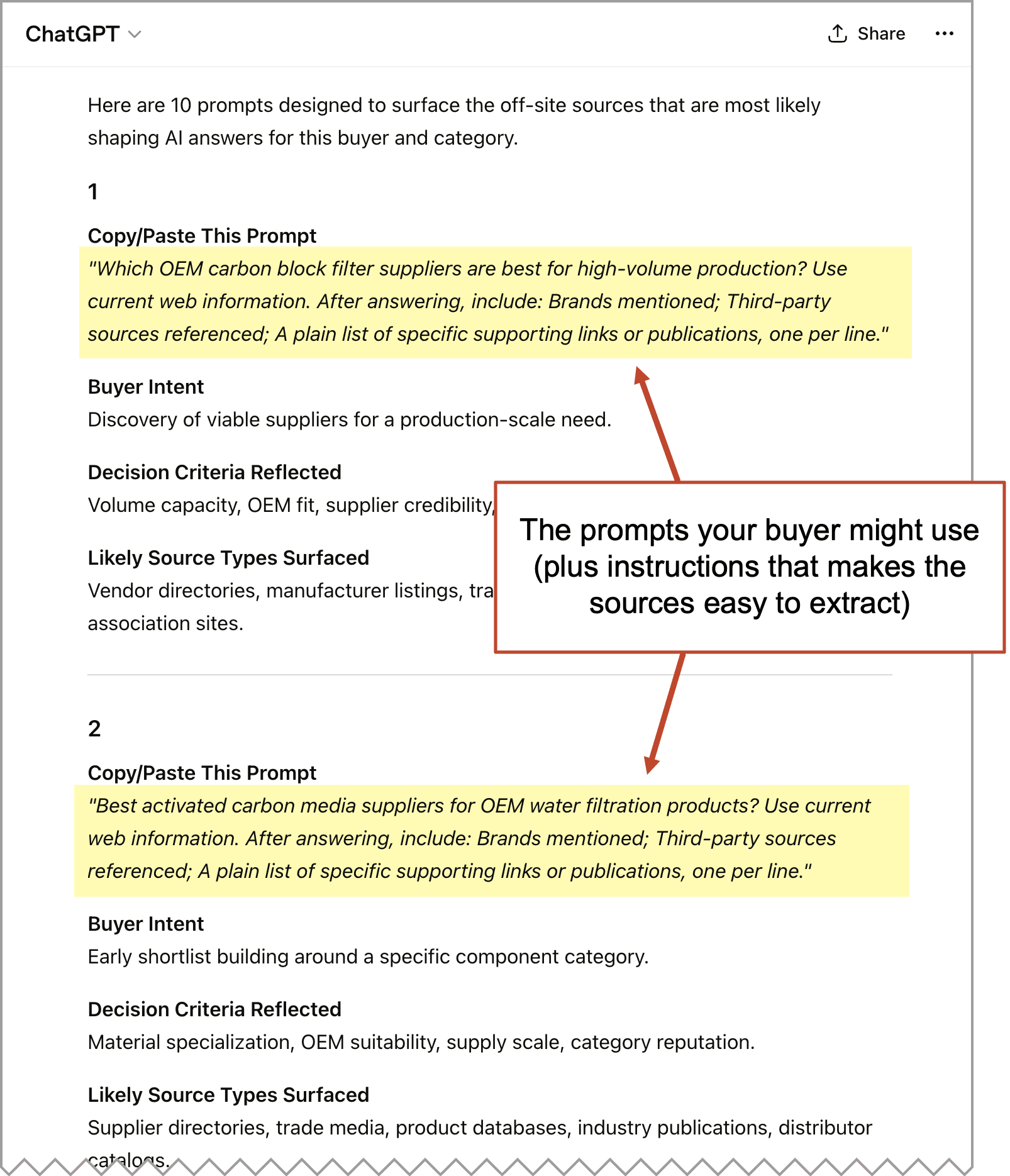

The response includes 10 prompts that your buyer would likely use to find companies like yours. Within each prompt is a small additional instruction that will make the sources easy to extract for off-site AEO analysis.

It will look something like this:

Notice that the prompts don’t include any brands. We want to see how the AI responds, who it finds and which sources it uses when we start fresh. We are emulating the experience of a buyer who isn’t yet aware of your brand.

Now you’re ready to proceed with the 10 “prompt runs” in Google.

Step 2: Ten “prompt runs” in Gemini or Google’s AI Mode

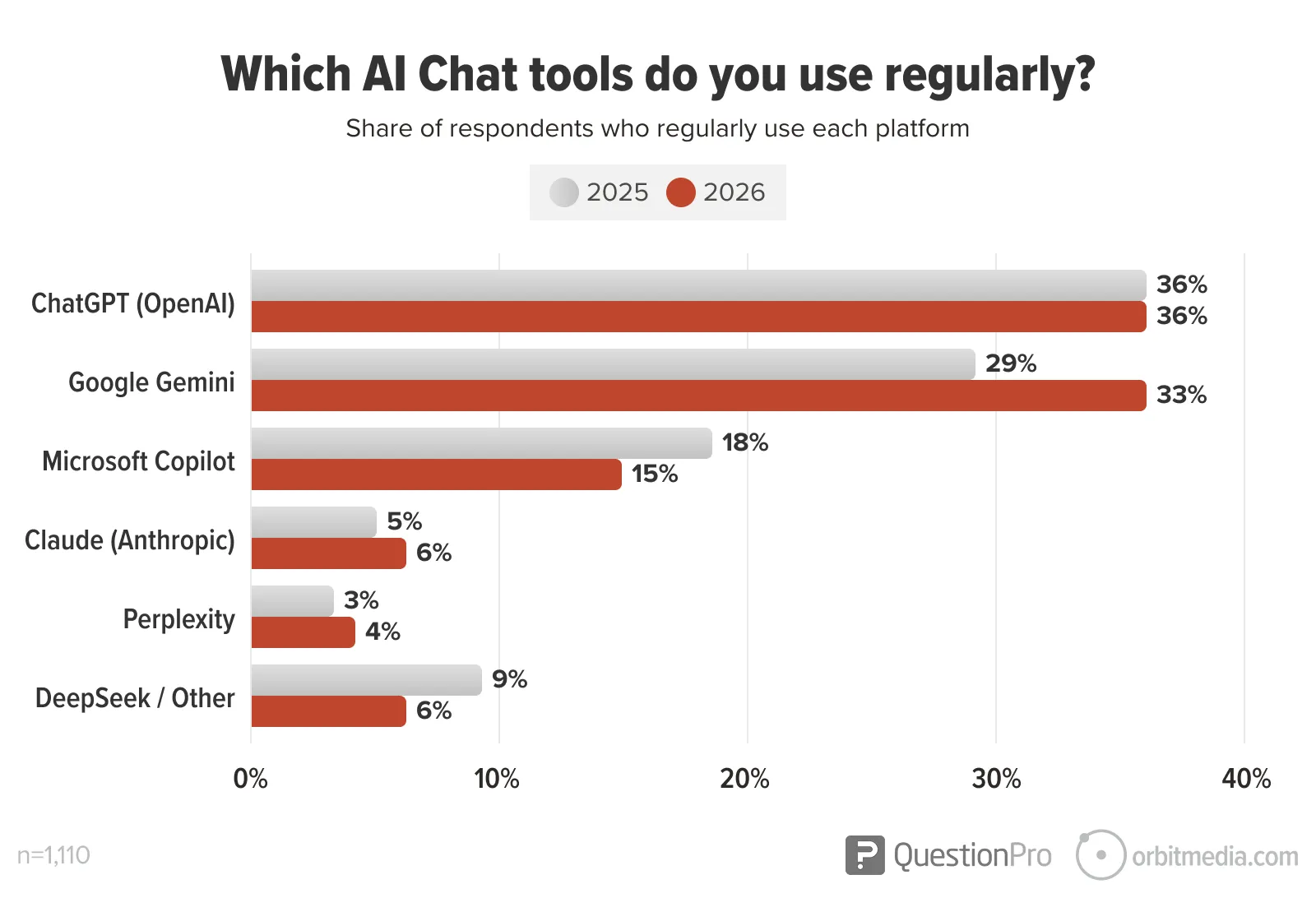

We recommend Google because it is dominant and a popular B2B research tool for buyers. Gemini may not be the most popular AI chatbot, but it’s catching up fast. Google Search is pushing AI hard (AI Mode and AI Overviews), so it’s likely to become even more important. But you could use this method with any LLM. Try them all, if you’d like.

Now comes the tedious part.

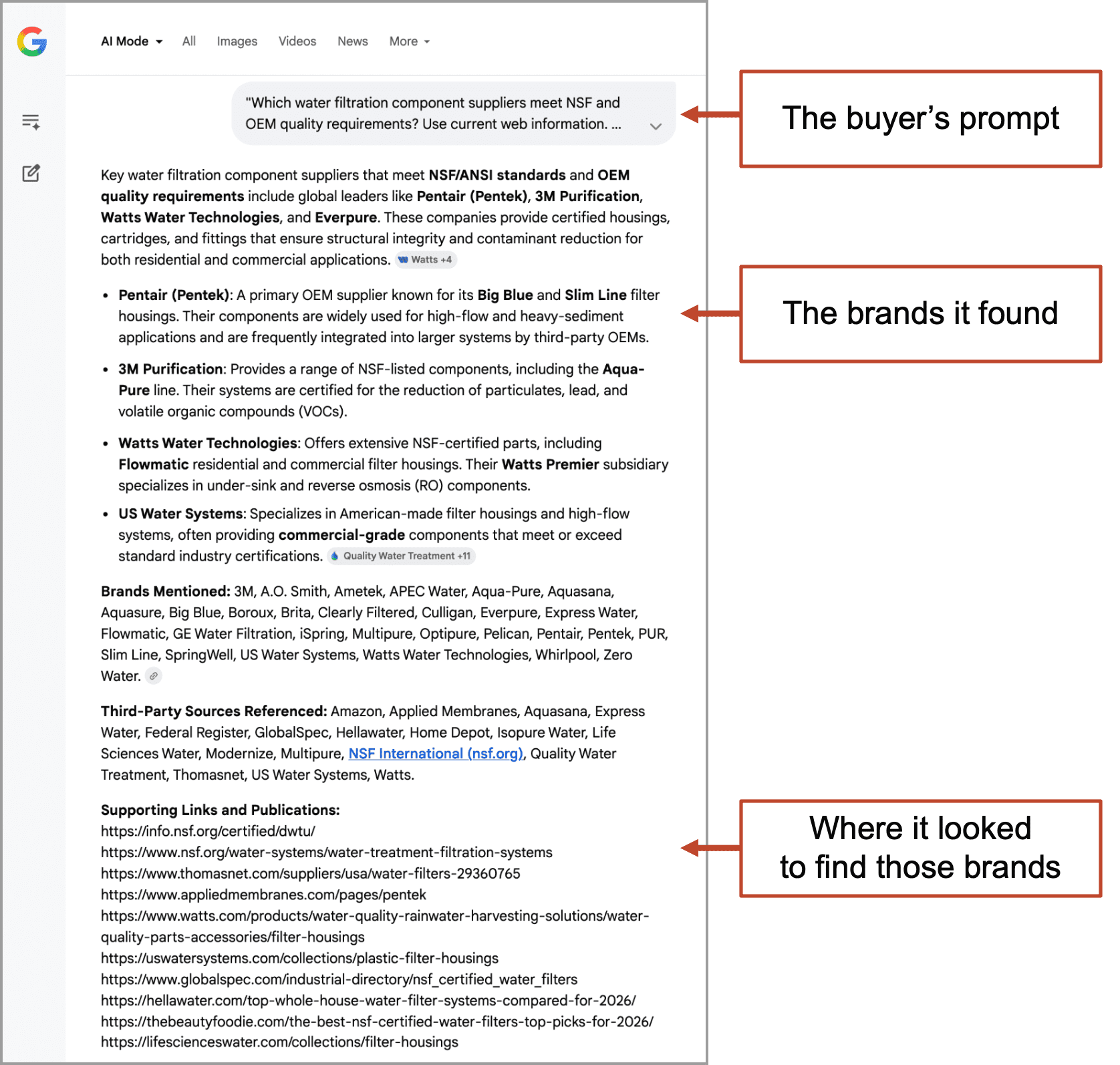

Copy the first of the 10 prompts (the highlighted area in the screenshot above), paste it into Gemini or Google’s AI Mode and send it. Then paste in the second, and so on. All 10 runs happen in one long conversation. Each prompt and response will look something like this:

Certainly, there are more comprehensive ways to do this audit and you can absolutely take this method further if you have time.

- Use a larger sample: Do more than 10 prompt runs

- Use more LLMs: Go beyond Google and run prompts in other models

- Do prompt runs in multiple, separate conversations: Doing it all in one conversation may create an “AI momentum” problem, where later responses are affected by earlier prompts, narrowing the results and possibly missing important sources.

But we don’t need a comprehensive audit to spot the patterns and get quick ideas for action. This is enough to proceed with our analysis.

Britney Muller, Founder, Organe Labs:

“The ‘10/10 runs’ approach is a solid instinct, because AI outputs, as you know, are non-deterministic. The same prompt can give you different answers each time. Ten runs give you a better, but still a very crude directional signal. It’s really not statistical certainty.”

Step 3: Create an archive of the responses and sources (one final prompt in Gemini)

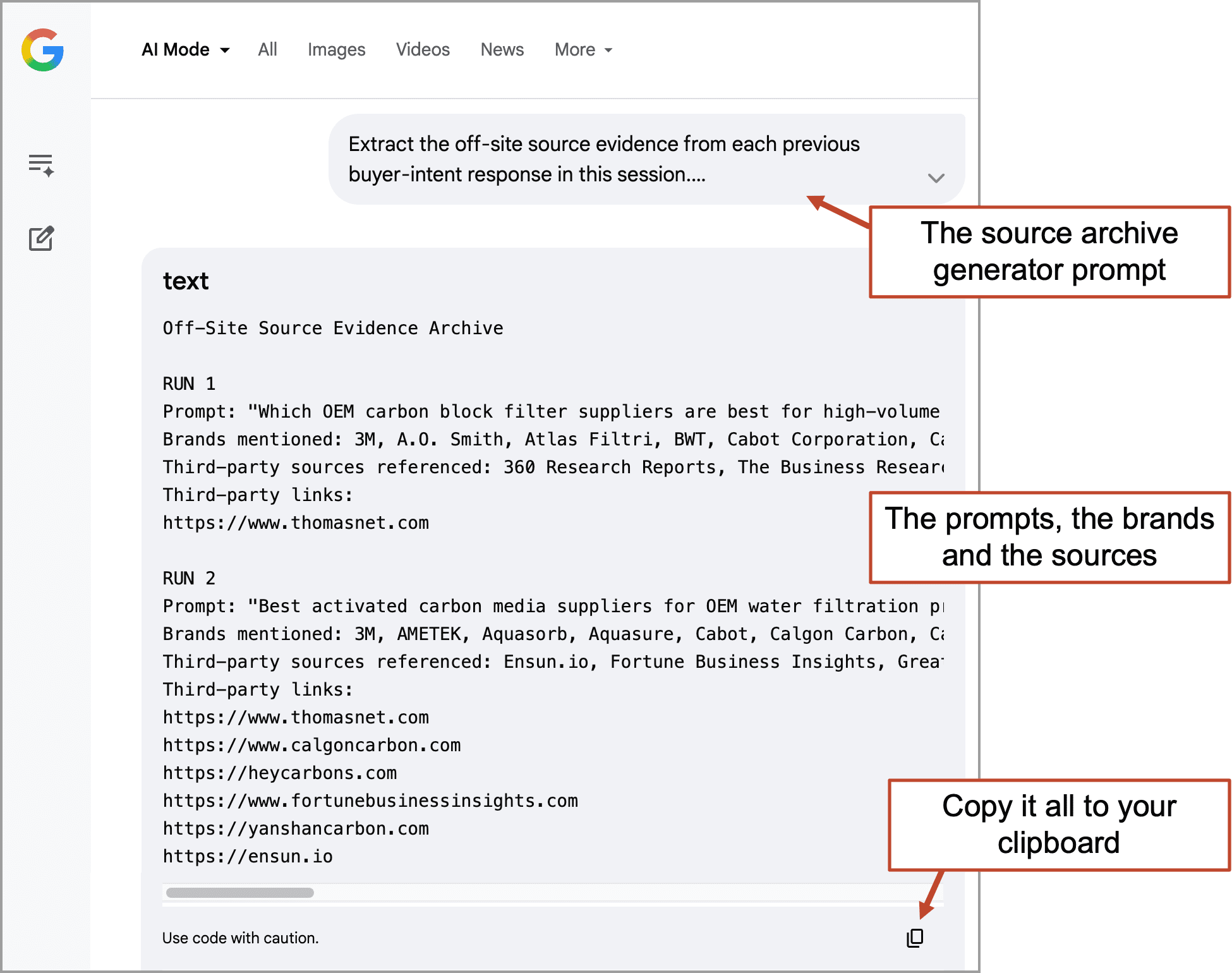

Now that you’ve completed the 10 “prompt runs” in Gemini or AI Mode, it’s time to pull out the sources. We’ll use another prompt to distill down just the details we want: the prompts, the brands in the responses and the sources the AI used.

This prompt will put them all together into a simple plain text archive that you can copy to your clipboard. Just enter the prompt below into the same conversation with Google, right after the last of the 10 prompt runs.

Note: This works perfectly in Gemini, but in tests within Google AI Mode, I had to run this prompt twice. That tool is really an extension of a search engine, not a typical generative AI. If it doesn’t work the first time, just try it again.

Source Archive Generator Prompt (Gemini or AI Mode)

Extract the off-site source evidence from each previous buyer-intent response in this session.

Start the output with this exact title on the first line: “Off-Site Source Evidence Archive”

For each response, create a clear “RUN [Number]” section and include only:

- Prompt

- Brands mentioned

- Third-party sources referenced

- Third-party links

Do not summarize, rewrite, or add analysis. Copy the content as written where possible. Preserve all links. Return the final output in a single code block.

This prompt removes all the noise and provides you with just what you need: the prompt, the brands that were surfaced and the sources it used to surface those brands. Now it’s all in a neat little archive. Perfect for our analysis.

Copy and paste the archive to your clipboard.
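If you’d like a quick cross-check on the archive before handing it to ChatGPT, a few lines of code can tally how many runs each linked domain appears in, the same “[x]/[y] runs” figure the Step 4 prompt asks for. This is a minimal Python sketch, not part of the method itself. It assumes each run in the archive starts on a line like “RUN 1” or “RUN [1]” and that links appear as plain http(s) URLs; the function names, the `exclude` parameter and the sample domains are all hypothetical. It only counts domains, so it can’t tell a review site from a directory; categorizing and prioritizing sources is still the analysis prompt’s job.

```python
import re
from collections import Counter
from urllib.parse import urlparse

# Assumed archive layout: each run starts on a line like "RUN 1" or "RUN [1]",
# and third-party links appear as plain http(s) URLs somewhere in that run.
RUN_HEADER = re.compile(r"^[#*\s]*RUN\s*\[?(\d+)\]?", re.MULTILINE)
URL = re.compile(r"https?://[^\s)\]>\"']+")


def domains_by_run(archive: str) -> dict[int, set[str]]:
    """Return {run number: set of unique domains linked in that run}."""
    headers = list(RUN_HEADER.finditer(archive))
    runs: dict[int, set[str]] = {}
    for i, header in enumerate(headers):
        # A run's section ends where the next "RUN n" header begins.
        end = headers[i + 1].start() if i + 1 < len(headers) else len(archive)
        section = archive[header.end():end]
        domains = set()
        for url in URL.findall(section):
            # Drop trailing punctuation, then normalize the host name.
            host = urlparse(url.rstrip(".,;:")).hostname or ""
            host = host.removeprefix("www.")
            if host:
                domains.add(host)
        runs[int(header.group(1))] = domains
    return runs


def run_coverage(archive: str, exclude: tuple[str, ...] = ()) -> list[tuple[str, str]]:
    """Rank domains by how many runs they appear in, formatted as 'x/y runs'."""
    runs = domains_by_run(archive)
    total = len(runs)
    counts = Counter(
        domain for domains in runs.values() for domain in domains if domain not in exclude
    )
    return [(domain, f"{n}/{total} runs") for domain, n in counts.most_common()]


# Hypothetical two-run archive using placeholder domains.
SAMPLE_ARCHIVE = """Off-Site Source Evidence Archive
RUN 1
Prompt: best payroll software for mid-size firms
Third-party links:
https://www.reviews.example.com/payroll
https://directory.example.org/payroll-software/
RUN 2
Prompt: top payroll vendors compared
Third-party links:
https://reviews.example.com/compare/a-vs-b.
https://vendor.example.net/
"""

for domain, coverage in run_coverage(SAMPLE_ARCHIVE, exclude=("vendor.example.net",)):
    print(domain, coverage)
```

Pass your own brand’s and competitors’ domains to `exclude` so the tally reflects third-party sources only. With the sample archive above, it prints `reviews.example.com 2/2 runs` followed by `directory.example.org 1/2 runs`.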

Step 4: Discover which off-site signals show up the most (and what to do next)

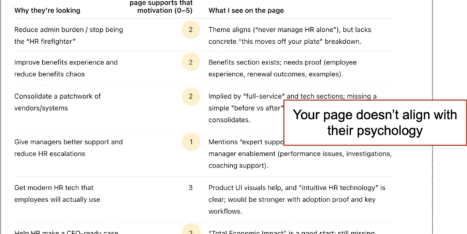

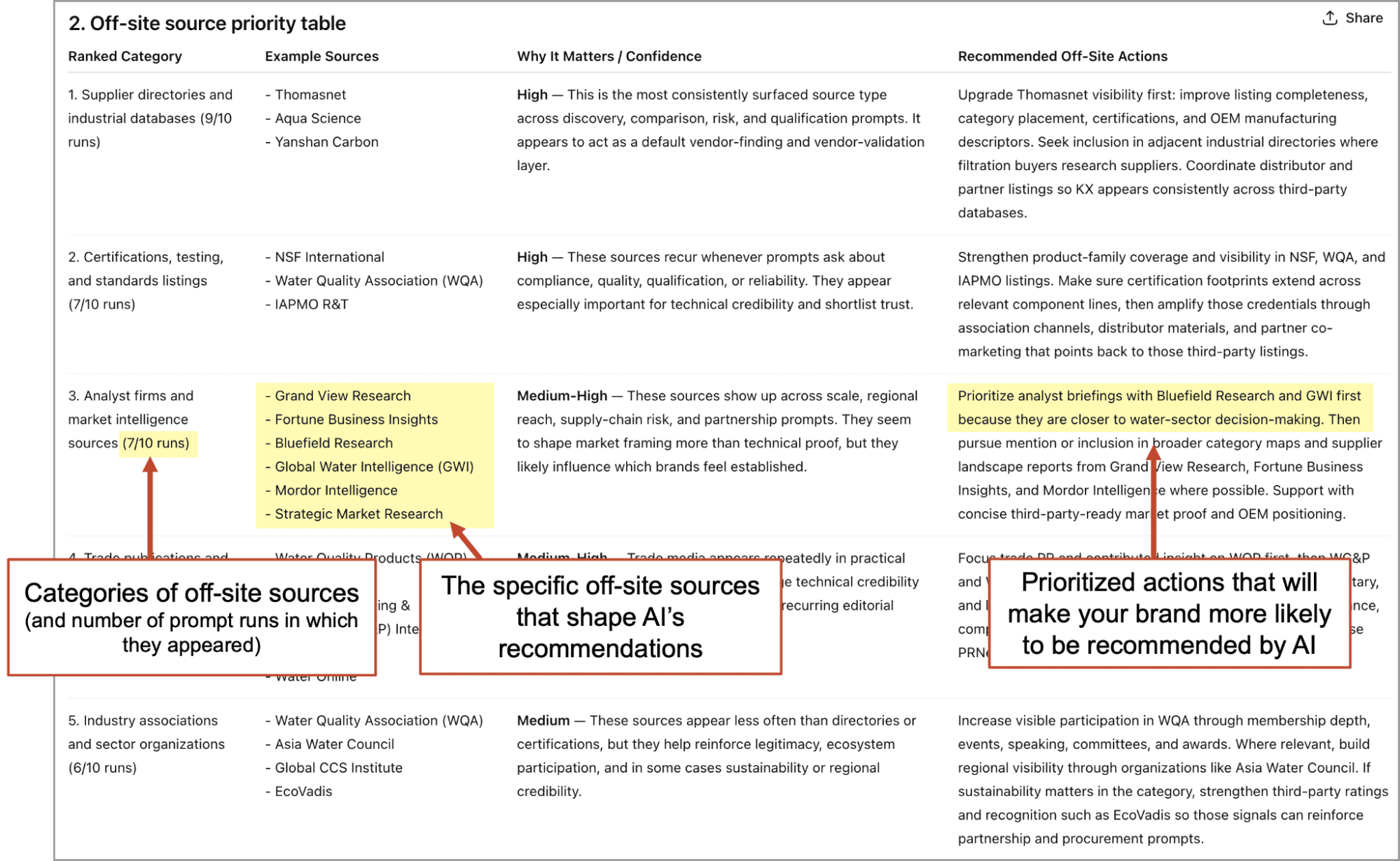

Our final prompt takes the archive and identifies, categorizes and prioritizes the recurring third-party sources. These are the off-site AEO signals that inform Google’s recommendations when your buyer researches your industry.

It also puts the audit in the context of your own brand. Did you show up in the responses? To find out, enter your brand at the top of the prompt.

You could run the final prompt right in the same conversation as the prompt runs (Gemini or AI Mode), but in our tests, the analysis was better in ChatGPT. So we recommend going back to ChatGPT and continuing the conversation you started with the first prompt above. I’m sure Claude would also be great for this.

Here’s the prompt. It’s a doozy. Paste in the source archive from your clipboard along with this prompt.

Off-Site Source Influence Audit (ChatGPT)

I’m pasting an archive of completed buyer-intent AI responses below. Analyze it to identify which off-site sources appear most likely to shape AI answers in this category.

My Brand: [company name]

Treat this as an observed-pattern audit, not a definitive map of model influence. Base conclusions on recurring surfaced sources, recurring source types, repeated brand visibility, and repeated patterns in how evidence is presented across the runs. Use My Brand as a secondary interpretation lens, not the primary basis for the audit.

The archive is the primary evidence base. It may be inconsistent, repetitive, or uneven in evidence quality. Some cited items may be standards, agencies, regulations, analyst firms, review sites, publications, directories, or vendor pages. Some “third-party sources referenced” may be inferred in the response rather than clearly evidenced by linked support, so distinguish observed evidence from inference.

Return:

1. Key patterns

Briefly summarize the most important recurring source, source-type, and brand patterns across the runs.

2. Off-site source priority table

Create one markdown table ranking the top 5 off-site source categories most likely shaping AI answers in this category.

Use these columns:

- Ranked Category

- Example Sources

- Why It Matters / Confidence

- Recommended Off-Site Actions

In Ranked Category, combine the rank, category, and visibility into one cell using this format:

[number]. [source category] ([x]/[y] runs)

In Example Sources, list 3–6 specific named sources from the archive whenever possible, such as actual directories, publications, review platforms, analyst firms, associations, or partner ecosystems. Prefer exact source names over generalized labels. Do not use vague labels like “category-specific directories,” “verified review platforms,” “news-oriented ranking posts,” or “platform ecosystem references” if a specific named source is available in the archive. Only use a generic source label if the archive clearly suggests a source type but does not name a specific source. Format the contents as a short bullet list within each cell so the examples are easy to scan.

In Why It Matters / Confidence, start with a short confidence label such as High, Medium, or Low, then briefly explain why the category matters. Rank categories by likely off-site influence and recurring visibility across runs, not by confidence alone.

In Recommended Off-Site Actions, give only practical off-site actions that increase third-party visibility and credibility in AI answers. Prioritize analyst outreach, directory inclusion, review generation, trade PR, association participation, awards, partner amplification, and community presence. When possible, make the recommendation source-specific so the next step is obvious. Reference the named sources in that row rather than giving only generic advice.

3. Competitive readout

Briefly summarize which brands appear most often, which seem most supported by third-party signals, any smaller brands that appear to overperform, and any brands that seem boosted mainly by brand-owned citations rather than third-party support.

4. Brand gap readout

Using My Brand as the reference point, briefly summarize how often My Brand appears across the runs, which off-site source categories seem to support My Brand most, where My Brand appears underrepresented versus competitors, and the top off-site opportunities most likely to improve My Brand’s visibility.

5. Evidence quality notes

Briefly note patterns that may weaken confidence, such as repeated vendor-owned links, repeated uncited claims, standards used as credibility signals without direct evidence, low-quality sources, or duplicated source patterns.

6. Prioritized action plan

Give a short prioritized action plan based on the observed off-site source patterns and My Brand’s visibility gaps. Include the top 3 highest-impact off-site actions to take first, why each action ranks where it does, the expected visibility benefit of each, and any important dependencies or sequencing.

Use the archive silently as background input and do not surface the pasted file, file name, badge, attachment, or source chips anywhere in the output. Focus recommendations on off-site actions only, not on-site publishing, website updates, or brand-owned content as primary actions. Prioritize recurring third-party signals over one-off mentions, and treat brand-owned and competitor-owned sites as contextual rather than primary evidence.

Here’s what the analysis will look like:

These are the sources that influence AI recommendations in your category.

Suddenly, this key component of your AI search strategy becomes clearer. This mini-report lays out next steps and which internal team or outside partner might do the work.

- If AI is using review sites to train on your category, you can influence responses through outreach to fans and reputation management (usually handled internally as part of customer marketing).

- If AI is using directories to train on your category, you can influence responses through submission and listing management (usually handled by the in-house marketing team, so you’ll have the logins and can manage your listings forever).

- If AI is using trade pubs and associations to train on your category, you can influence responses through content marketing collaborations and influencer marketing (usually handled in-house, but can be outsourced to a PR firm).

- If AI is using analyst reports to train on your category, you can influence responses through outreach and requests for inclusion (usually handled in-house, but can be outsourced to a PR firm).

- If AI is using “listicles” to train on your category, you can influence responses through outreach or guest posting (often handled by an SEO or digital PR firm).

Notice how some of the actions could be best handled by a partner, such as an SEO agency or PR firm, and some are probably best done in-house.

I suspect you can get a lot done in-house before outsourcing. If you’d like an action plan with detailed playbooks for each, just ask the AI in your next prompt. Tell it all about your internal resources, current partners and budget.

Corey Northcutt, Chief Optimization Officer at Orbit:

“Most of the popular GEO myths, the list of which already seems endless, are about ‘off-page’ work. There are no ‘signals’ like with SEO. No scores, no math, no webspam counterweights. Not yet, anyway. Where AI results are not driven by organic search, it’s purely about word frequency and proximity. Here, the most biased result almost always wins. Look at a few hundred of your own prompts, and you’ll quickly find Reddit and Wikipedia citations to be less noticeable than in Google. Instead, it’s a small, actionable list of niche 3rd party affiliate websites, sponsored endorsements, or other biased actors that you can easily prioritize.”

It’s true that AI responses aren’t always based on searches. That brings us to our final topic today…

What does AI know about your brand from memory without searching?

We just made the case for very targeted efforts (directories, review sites, listicles, analysts), but what about all of the other sources? What about news media and mainstream press? What about press releases? What about Forbes? What about those high-domain-authority websites that SEOs love?

There is definitely a place for traditional PR in your AI-search strategy.

Because sometimes, AI doesn’t search. Sometimes it just relies on its pre-training, spots certain players and recommends them. Big brands are big in AI responses because they’re big in the pre-training. Remember, the “P” in ChatGPT stands for “pretrained.”

What does ChatGPT know about your brand from its pre-training?

There’s an easy way to find out.

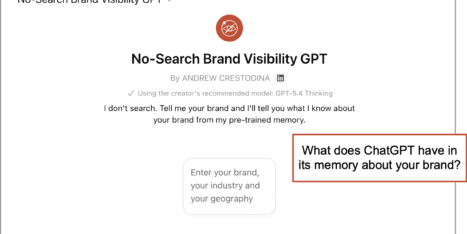

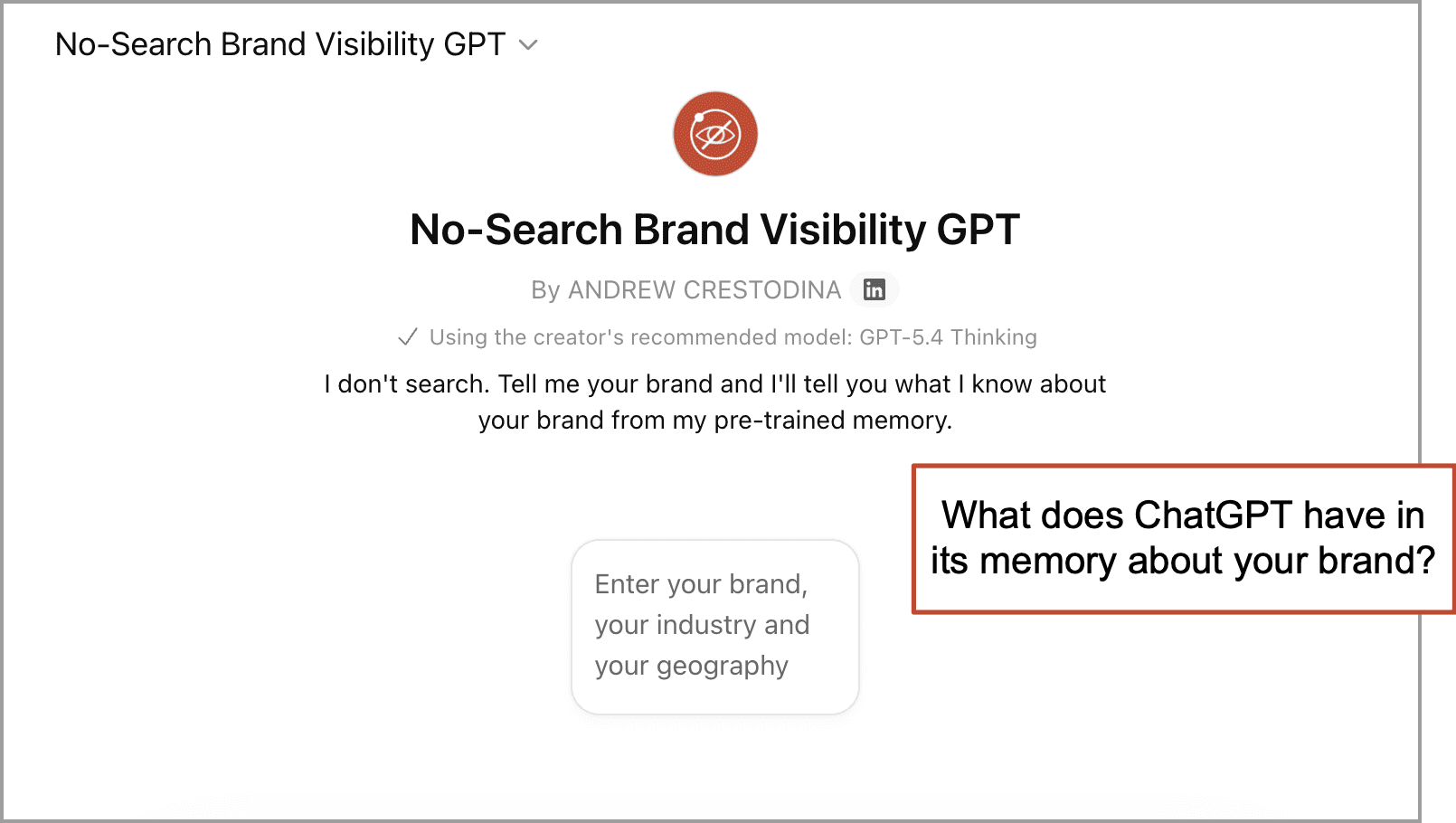

You could simply ask ChatGPT about your brand and tell it not to search, but that isn’t reliable. It’s hard to stop it from searching. The best way to look into ChatGPT’s memory is to make a custom GPT with “Web Search” turned off.

We made one that you can use 👉 Orbit’s No-Search Brand Visibility GPT.

It’s very simple. Just enter your brand and find out what AI remembers about it without searching. It’s a strange tool because it’s LESS functional than the default AI. We removed one of AI’s main features!

This is not a perfect look inside pre-training, but it reveals what the model says when live web search is not available. It’s a clean, non-search test. It must rely on what it already knows.

And if it doesn’t know much, traditional PR will help. Press placements are huge in the training data, and reputable media is likely weighted more heavily than company websites. The way to become famous in the mind of ChatGPT is the same as it is offline: tell your best stories through credible sources. That’s PR.

Colophon

The prompts in this article were created using our own “Prompt Collaborator GPT” over the course of eight hours and dozens of exchanged messages. Rather than asking the AI to simply generate these prompts, I started with a long conversation about the general concept. I asked many open-ended questions. Many times, the AI provided valuable ideas for improvement. This final version was consolidated from five prompts down to three, then we tweaked and tweaked so the outputs are practical and nicely formatted. Tests were run across multiple brands, industries, and AI models.

This method is almost like a little piece of software. Indeed, it may partly replace tools you’re paying good money for.

Someday, we’ll make an article and video showing how to build your own prompt collaborator and work with it to create your own highly functional, multi-prompt AI methods.