Little things make a big difference. In digital marketing, this is especially true. Digital is about doing a hundred little things right.

Some of those things relate to search engine optimization and rankings. And some mistakes in this area cause huge, deal-breaking, rank-destroying disasters.

This post is a quick roundup of some of the worst SEO mistakes we’ve seen. These are the little things that can sink the ship, pulling you down in search engines, down to the abyss of SEO irrelevance.

Mistake #1: Removing Your Own Site From Google

What’s the opposite of search friendly? Search rude. Here’s how to be rude to Google and tell them to ignore your website.

This is a surefire way to sink your own site.

There’s a venue here in Chicago that books all kinds of shows. People love it. But ask Google for showtimes and you get this…

That’s right. This is what that little message says…

A description for this result is not available because of this site’s robots.txt

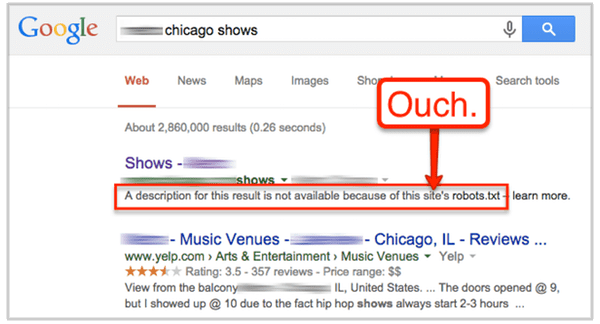

The robots.txt file is just a place to talk to search engines. Every site has one (or should). To see yours, just go to www.YourWebsite.com/robots.txt. While you’re there, make sure it doesn’t look like this.

This file is telling every search engine (User-agent: *) to ignore everything (Disallow: /).

That’s bad.

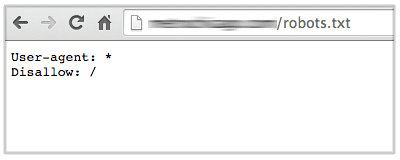

This is an especially big SEO mistake because Google is now so good at helping people find showtimes. This is what it could look like.

Nice, right? A beautiful, simple way to help your audience find out what’s showing.

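Actually, the biggest SEO mistake is a close relative of this one: “noindex.” It is usually set as a robots meta tag in a page’s HTML (or as an X-Robots-Tag HTTP header) rather than in robots.txt, and Google no longer honors a noindex rule placed inside robots.txt. Applied sitewide, it will completely remove your site from Google. You won’t show up at all.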

This could be useful to add to an extranet or a login area. But generally, you don’t want to torpedo a marketing website.
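To check a page for a stray noindex, you can scan its HTML for a robots meta tag. The sketch below is a minimal example using only Python's standard library. It assumes the directive is set in a `<meta name="robots">` tag; it does not look at crawler-specific tags (such as `name="googlebot"`) or at HTTP headers, and the class and function names are made up for illustration.

```python
from html.parser import HTMLParser


class RobotsMetaFinder(HTMLParser):
    """Collects the directives found in any <meta name="robots"> tag."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # HTMLParser lowercases attribute names; values may be None.
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() == "robots":
            for part in attr.get("content", "").split(","):
                self.directives.add(part.strip().lower())


def has_noindex(html):
    """Return True if the HTML asks search engines not to index the page."""
    finder = RobotsMetaFinder()
    finder.feed(html)
    return "noindex" in finder.directives


if __name__ == "__main__":
    page = '<html><head><meta name="robots" content="noindex, nofollow"></head></html>'
    print(has_noindex(page))  # True
```

Run it against the HTML of your home page and a few key landing pages. A `True` on a marketing page is worth investigating.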

The Fix:

Make sure your robots.txt file allows Google to properly crawl and index your website. Here’s a simple guide to robots.txt best practices that might help.
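If you would rather test the file than eyeball it, Python's standard library includes a robots.txt parser. This is a minimal sketch, not a full audit: the `can_crawl` helper, the example.com URLs, and the `/login/` path are assumptions made for illustration.

```python
from urllib.robotparser import RobotFileParser


def can_crawl(robots_txt, url, user_agent="Googlebot"):
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# The "search rude" file described above: every crawler, everything blocked.
BLOCK_ALL = "User-agent: *\nDisallow: /\n"

# A friendlier file: only a hypothetical /login/ area is off limits.
BLOCK_LOGIN_ONLY = "User-agent: *\nDisallow: /login/\n"

if __name__ == "__main__":
    home = "https://www.example.com/"
    print(can_crawl(BLOCK_ALL, home))         # False
    print(can_crawl(BLOCK_LOGIN_ONLY, home))  # True
```

Paste in the contents of your own robots.txt and the URLs you most want to rank. Every one of them should come back `True`.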

Let’s move on to the next potential disaster.

Mistake #2: Targeting Phrases No One Is Searching For

Ranking for a phrase that doesn’t bring in traffic might feel good, but really it’s just vanity.

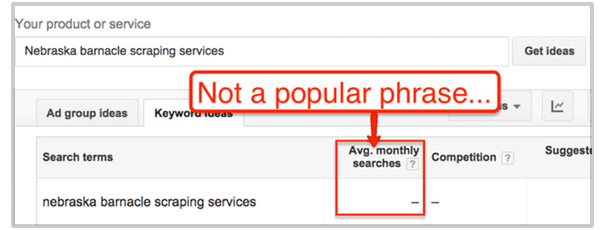

Enter the possible keyphrase into the Google Keyword Planner. If you see a dashed line, rather than a number, then the phrase was searched for fewer than 10 times per month on average over the last 12 months.

This doesn’t actually mean zero, it just means very low. If there is other evidence that there is demand for the topic (for example, Google suggests the phrase when you type related phrases into the search box) then it still might be worth targeting.

The Fix:

Target phrases when you have some indication that people are searching for them, either because they have more than “-” searches according to the Keyword Planner or they are suggested search phrases in Google.
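That rule is simple enough to write down as a filter. The sketch below assumes you have copied the numbers out of the Keyword Planner by hand; the `Keyphrase` class, its field names, and the sample phrases are all hypothetical, and `None` stands in for the dash.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Keyphrase:
    phrase: str
    monthly_searches: Optional[int]    # None stands for the "-" in Keyword Planner
    suggested_by_google: bool = False  # True if Google suggests it in the search box


def worth_targeting(k):
    """A phrase qualifies if it shows search volume or Google suggests it."""
    has_volume = k.monthly_searches is not None and k.monthly_searches > 0
    return has_volume or k.suggested_by_google


if __name__ == "__main__":
    # Made-up candidates, for illustration only.
    candidates = [
        Keyphrase("chicago concert venue", 1300),
        Keyphrase("loft concert venue showtimes", None, suggested_by_google=True),
        Keyphrase("synergistic venue solutions", None),
    ]
    for k in candidates:
        print(k.phrase, worth_targeting(k))
```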

Ready to dig into keyword research? Here’s our 10-step guide to increasing targeted traffic using keywords. It explains everything.

Mistake #3: Targeting Phrases That Are Too Competitive

This is a more common SEO mistake: targeting a super popular phrase that you have no chance of ranking for. When does it make sense to target a low-volume phrase? When the more popular phrases are too competitive.

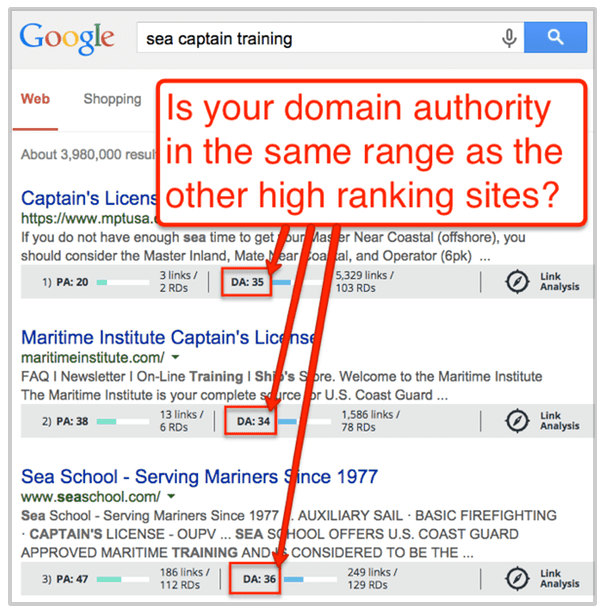

Here’s what most people don’t understand about search engine optimization: If the other high-ranking web pages for the target keyphrase are much more authoritative than yours, you don’t have a chance of ranking.

Target a keyphrase only if your authority is in the same range as the authority of the high-ranking websites.

- How can you check your own authority? Use Link Explorer.

- How can you check the authority of the high-ranking sites for the phrase? Install MozBar (a Chrome extension). Turn it on and search for the phrase.
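Once you have both sets of numbers, the comparison can be sketched in a few lines. "In the same range" is not a precise rule, so the 10-point tolerance below is an assumption, as are the function name and the sample scores. The authority values themselves would come from the tools mentioned above.

```python
from statistics import median
from typing import Sequence


def in_same_league(my_authority: float,
                   competitor_authorities: Sequence[float],
                   tolerance: float = 10.0) -> bool:
    """True if my_authority is at most `tolerance` points below the median
    authority of the pages that already rank for the phrase."""
    if not competitor_authorities:
        raise ValueError("need at least one competitor score")
    return my_authority >= median(competitor_authorities) - tolerance


if __name__ == "__main__":
    mine = 35  # hypothetical score for your own site
    print(in_same_league(mine, [80, 85, 90]))  # False: pick another phrase
    print(in_same_league(mine, [30, 40, 42]))  # True: worth a shot
```

The median is used rather than the average so that one giant site in the results does not scare you away from a phrase the rest of the field makes winnable.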

The Fix:

Pick your battles. Every phrase has its own competitive set. Some topics are extremely competitive (and worth millions of dollars) and others are relatively simple to rank for, taking little effort.

If you’re not in their league, target a different phrase!

Want to learn more about how domain authority works? We made a video for you here.

Once you’ve picked your phrases, make sure to avoid this next SEO mistake.

Mistake #4: Keyword Stuffing

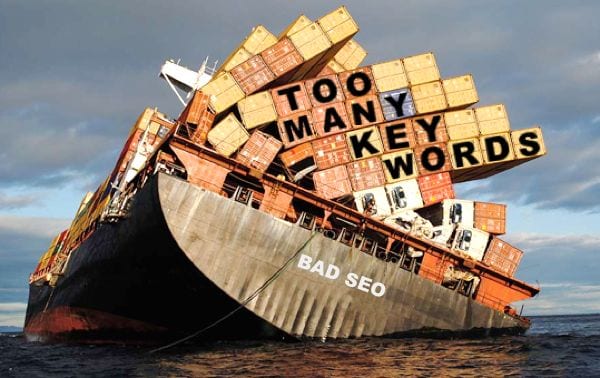

The problem with keyword stuffing is that keyword stuffing is very obvious to search engines, which notice keyword stuffing very easily and can penalize web pages stuffed with keywords.

Keyword stuffing and keyphrase stuffing is also bad for visitors, who may read a page stuffed with keywords and think it’s strange that the webpage has so many of the same keywords.

So stuffing and filling pages with keywords and keyphrases is bad for search engine optimization (SEO) and for visitors.
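One rough way to catch stuffing before you publish is to measure how much of the page a single keyphrase takes up. The sketch below is only a yardstick: search engines do not publish a density threshold, the function name is made up, and the tokenizer is a simple regular expression that handles plain English text.

```python
import re


def keyphrase_density(text, keyphrase):
    """Share of the words in `text` that belong to non-overlapping
    occurrences of `keyphrase`, from 0.0 to 1.0."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    phrase = re.findall(r"[a-z0-9']+", keyphrase.lower())
    if not words or not phrase:
        return 0.0
    n = len(phrase)
    hits, i = 0, 0
    while i <= len(words) - n:
        if words[i:i + n] == phrase:
            hits += 1
            i += n  # skip past the match so occurrences never overlap
        else:
            i += 1
    return hits * n / len(words)


if __name__ == "__main__":
    sample = "Keyword stuffing is bad because keyword stuffing is obvious"
    print(round(keyphrase_density(sample, "keyword stuffing"), 2))  # 0.44
```

If one phrase accounts for a large share of the words on a page, read the copy out loud. If it sounds strange to you, it will sound strange to visitors.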

Mistake #5: Generic Home Page Title Tag

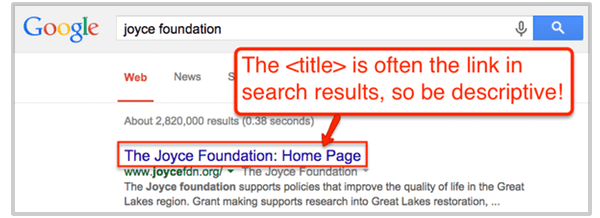

The title tag of your homepage is the single most important piece of SEO real estate on your website. If your website were a book, this would be the text on the cover.

It’s important not just for ranking high, but for getting clicks if you do rank. It affects click-through rates because it often appears as the link in Google search results.

The all-time worst home page title tag? You guessed it… “home”

Why? It says nothing about what you do. It doesn’t help you rank or communicate with potential visitors.

The Fix:

Write a title that tells Google and people what you do. Here are some quick guidelines.

- Include a keyphrase for the main category for your type of business

- Include the company name at the end, after the keyphrase

- Keep it to no more than 55 characters (if it’s longer, it will get truncated)
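These guidelines are easy to turn into a quick check. The following is a minimal sketch: the function names, the `" | "` separator, and the sample business are assumptions, and the 55-character limit is simply the one given above.

```python
MAX_TITLE_LENGTH = 55  # limit from the guidelines above
GENERIC_TITLES = {"home", "home page", "homepage"}


def build_title(keyphrase, company, separator=" | "):
    """Keyphrase first, company name at the end."""
    return f"{keyphrase}{separator}{company}"


def title_problems(title, keyphrase, company):
    """Return a list of guideline violations (empty if the title passes)."""
    t = title.strip()
    problems = []
    if t.lower() in GENERIC_TITLES:
        problems.append("generic title")
    if keyphrase.lower() not in t.lower():
        problems.append("missing keyphrase")
    if not t.lower().endswith(company.lower()):
        problems.append("company name is not at the end")
    if len(t) > MAX_TITLE_LENGTH:
        problems.append(f"longer than {MAX_TITLE_LENGTH} characters")
    return problems


if __name__ == "__main__":
    # Hypothetical business, for illustration only.
    good = build_title("Chicago Web Design", "Acme Studio")
    print(good, title_problems(good, "Chicago Web Design", "Acme Studio"))
    print(title_problems("Home", "Chicago Web Design", "Acme Studio"))
```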


Mistake #6: Not Excluding Search Robots from Your Analytics

Some of your traffic is actually search engine robots, not human visitors. For most sites, I don’t believe the (out-of-date) research that suggests that 61% of traffic is robots, but it is possible that your Analytics are a bit skewed.

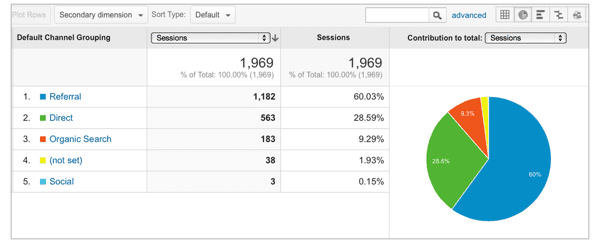

If you have a very low traffic site, it’s possible that your Analytics is completely overrun. Look at the Analytics for this site:

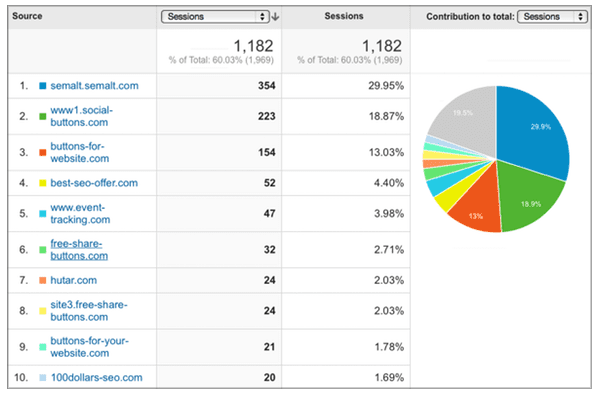

60% of the visitors are referrals. They’re coming from other websites. Now let’s look at which sites they’re coming from:

Looks fishy. Are those real visitors coming from 100dollars-seo.com and best-seo-offer.com? Doubtful. These are robots. So this is an example where 60% of the traffic really is from robots!

The Fix:

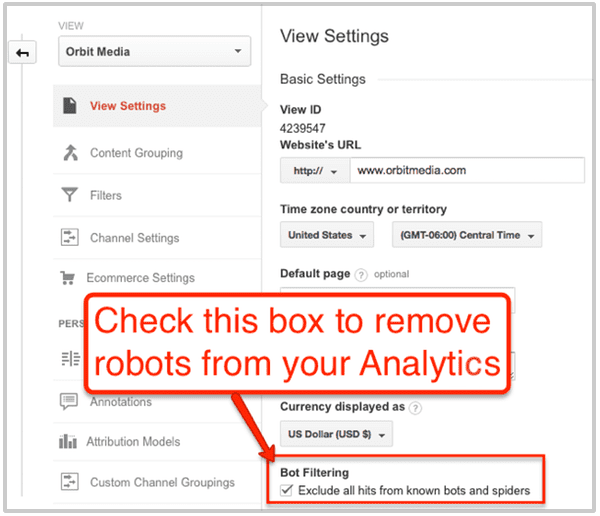

Getting the bots out of your Analytics is easy. Just go to the View Settings in the Admin section and check the box under “Bot Filtering.”

This might not remove “ghost bots” and “zombie bots” (scary, right? more on that here) but it will remove all the robots known to the IAB Spiders & Robots Policy Board (yes, that’s a thing).
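If you export your referral data, you can also estimate the robot share yourself. The sketch below is a rough illustration, not a replacement for the Analytics filter: the function names are made up, the blocklist holds only the two domains mentioned above, and the sample referrer list is invented.

```python
from typing import Iterable
from urllib.parse import urlparse

# The two spammy referrers named above; extend this with your own findings.
SUSPECT_DOMAINS = {"100dollars-seo.com", "best-seo-offer.com"}


def referrer_host(referrer: str) -> str:
    """Extract the hostname from a referrer, with or without a scheme."""
    parsed = urlparse(referrer if "://" in referrer else "//" + referrer)
    host = (parsed.hostname or "").lower()
    return host[4:] if host.startswith("www.") else host


def is_suspect(referrer: str) -> bool:
    """True if the referrer is a suspect domain or one of its subdomains."""
    host = referrer_host(referrer)
    return any(host == d or host.endswith("." + d) for d in SUSPECT_DOMAINS)


def bot_share(referrers: Iterable[str]) -> float:
    """Fraction of referral visits that come from suspect domains."""
    referrers = list(referrers)
    if not referrers:
        return 0.0
    return sum(is_suspect(r) for r in referrers) / len(referrers)


if __name__ == "__main__":
    visits = [  # invented sample data
        "https://100dollars-seo.com/",
        "best-seo-offer.com",
        "https://www.google.com/",
        "https://twitter.com/someone",
        "http://www.100dollars-seo.com/offer",
    ]
    print(bot_share(visits))  # 0.6
```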

Sink or Swim?

Search rankings are like everything else in digital marketing: it’s a hundred little things that make the difference. Hopefully, checking these six on your site will make the difference between floating up and sinking down.

How many of these had you already checked? Anything we missed? Add your insights below and your fellow readers might thank you…